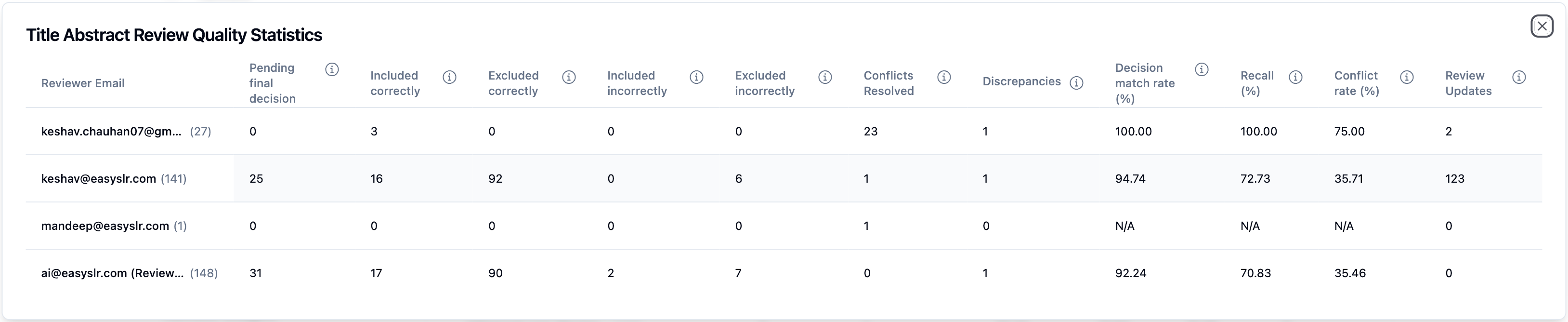

Revamped Quality Statistics Dashboard

We’re excited to introduce the Revamped Quality Dashboard — designed to help teams monitor and maintain reviewer performance across systematic literature reviews. This feature ensures your review workflow stays consistent, accurate, and aligned with project protocols.

Key Highlights

Reviewer Performance Evaluation

The Quality Dashboard evaluates each reviewer’s adherence to screening guidelines, including PICOS criteria and project-specific inclusion/exclusion rules. This is particularly valuable in projects with multiple reviewers (2 humans + AI, or 1 human + AI).

Track Critical Metrics

The dashboard monitors essential metrics to ensure workflow integrity and identify areas for improvement:

Pending Final Decision: Articles awaiting final decisions from all required reviewers.

Included Correctly: Articles correctly included.

Excluded Correctly: Articles correctly excluded.

Included Incorrectly: Articles incorrectly included (should have been excluded).

Excluded Incorrectly: Articles incorrectly excluded (should have been included).

Conflicts Resolved: Number of decision conflicts resolved by the reviewer.

Decision Match Rate (%): Percentage of reviewer decisions matching the final decisions.

Recall (%): Percentage of articles included in the final decision that the reviewer also included.

Conflict Rate (%): Percentage of decisions that resulted in conflicts with other reviewers (excluding the lead reviewer).

Review Updates: Total number of decision changes made by a reviewer.
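To make the definitions above concrete, here is a minimal Python sketch of how the count-based metrics and two of the percentages could be derived from one reviewer's decisions. It is an illustration only, not the dashboard's actual implementation: the `Decision` class, the `quality_metrics` function, the dictionary keys, and the choice to leave pending articles out of the percentage denominators are all assumptions. The ‘i’ icon in the dashboard remains the authoritative source for each calculation.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Decision:
    """One reviewer's decision on one article (hypothetical structure)."""
    reviewer_included: bool
    final_included: Optional[bool]  # None means the final decision is still pending


def quality_metrics(decisions: List[Decision]) -> Dict[str, float]:
    # Assumption: only articles with a final decision count toward the rates.
    decided = [d for d in decisions if d.final_included is not None]
    pending = len(decisions) - len(decided)

    included_correctly = sum(d.reviewer_included and d.final_included for d in decided)
    excluded_correctly = sum(not d.reviewer_included and not d.final_included for d in decided)
    included_incorrectly = sum(d.reviewer_included and not d.final_included for d in decided)
    excluded_incorrectly = sum(not d.reviewer_included and d.final_included for d in decided)

    total = len(decided)
    matches = included_correctly + excluded_correctly
    # Articles whose final decision is "include", whether or not the reviewer agreed.
    finally_included = included_correctly + excluded_incorrectly

    return {
        "pending_final_decision": pending,
        "included_correctly": included_correctly,
        "excluded_correctly": excluded_correctly,
        "included_incorrectly": included_incorrectly,
        "excluded_incorrectly": excluded_incorrectly,
        # Guard against division by zero when nothing has been decided yet.
        "decision_match_rate": 100 * matches / total if total else 0.0,
        "recall": 100 * included_correctly / finally_included if finally_included else 0.0,
    }
```

Conflict Rate, Conflicts Resolved, and Review Updates are omitted from the sketch because they depend on other reviewers' decisions and on decision history, which this simplified structure does not model.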

Data Transparency & Guidance

All metrics are calculated based on the final decision for each article. Hover over the ‘i’ icon next to any metric to see detailed descriptions and calculations.