Conflict Analysis Now Includes Recall, Accuracy & Conflict Rate

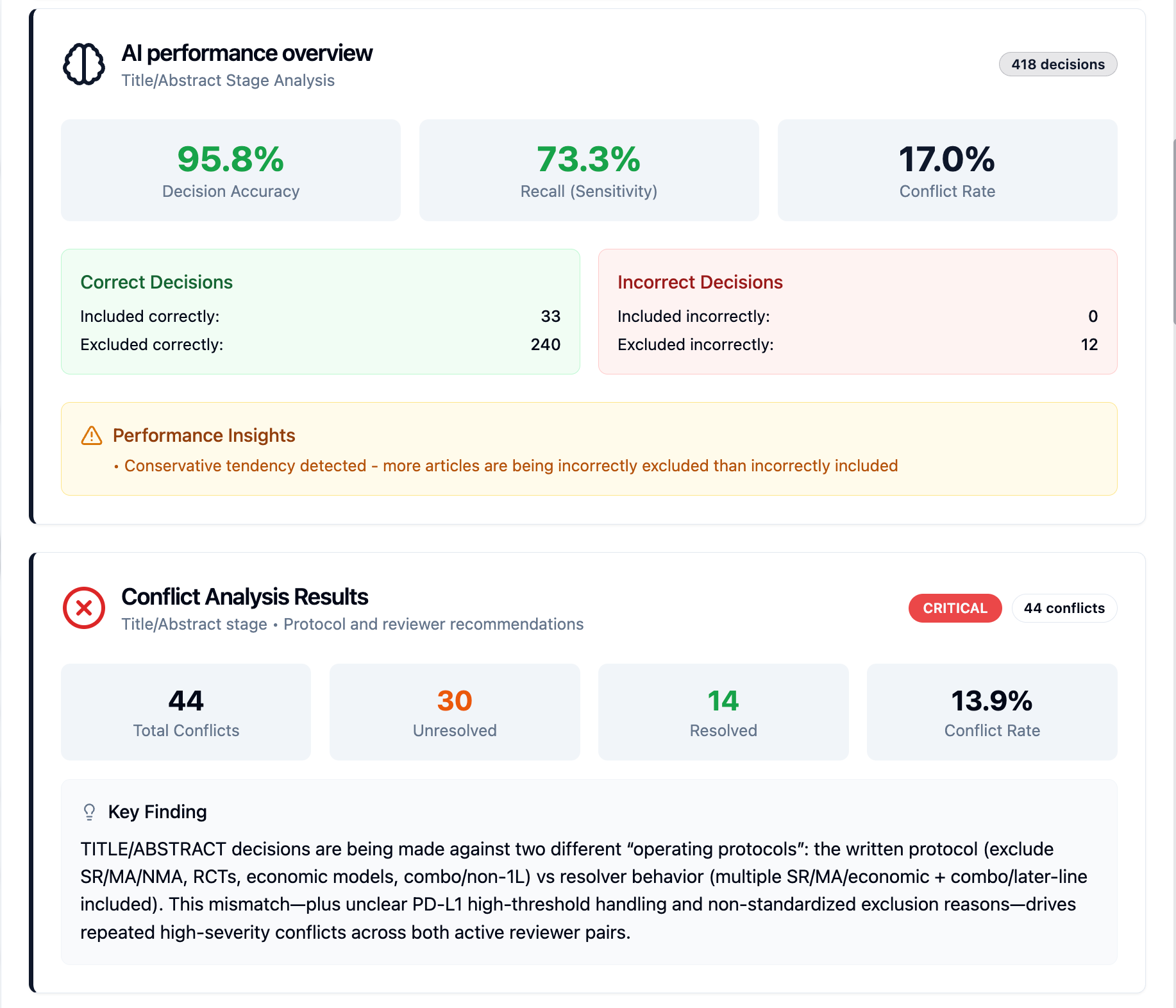

The Conflict Analysis feature has been enhanced with three new performance metrics:

Recall

Accuracy

Conflict Rate

These additions provide deeper insight into reviewer and AI screening performance, helping teams evaluate decision consistency and overall screening effectiveness.

What’s New

1. Recall

Measures how effectively relevant studies are identified.

It represents the percentage of truly relevant studies that were correctly included.

Higher recall = fewer relevant studies missed.
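As a rough illustration, the calculation can be sketched in Python. This is not the product's implementation. The function name `recall_pct`, the dictionary inputs, and the choice to treat a study as "truly relevant" when its final resolved decision is "include" are all assumptions made for this example.

```python
def recall_pct(initial, final):
    """Percentage of relevant studies that the initial screen included.

    Assumption: a study counts as relevant when its final resolved
    decision is "include". Both arguments map study ID -> decision.
    """
    relevant = [sid for sid, decision in final.items() if decision == "include"]
    if not relevant:
        return None  # recall is undefined when no study is relevant
    caught = sum(1 for sid in relevant if initial.get(sid) == "include")
    return 100.0 * caught / len(relevant)


# Hypothetical data: four relevant studies, one of them missed at screening.
final = {"s1": "include", "s2": "include", "s3": "include",
         "s4": "include", "s5": "exclude"}
initial = {"s1": "include", "s2": "include", "s3": "exclude",
           "s4": "include", "s5": "include"}
print(recall_pct(initial, final))  # 75.0
```

Note that the wrongly included study `s5` does not lower recall. Only missed relevant studies do.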

Why This Matters

High recall helps ensure that important studies are not excluded during screening, which supports stronger methodological rigor.

2. Accuracy

Measures overall correctness of screening decisions.

It compares initial screening decisions against final resolved outcomes and reports how often the two agree.

Higher accuracy = better overall decision alignment.
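A minimal sketch of this comparison in Python follows. It assumes that accuracy is the share of studies whose initial decision equals the final resolved decision. The function name `accuracy_pct` and the dictionary inputs are hypothetical and are not taken from the product.

```python
def accuracy_pct(initial, final):
    """Percentage of studies whose initial decision matches the final one.

    Assumption: only studies with a final resolved decision are scored.
    Both arguments map study ID -> decision.
    """
    if not final:
        return None  # nothing has been resolved yet
    matches = sum(1 for sid, decision in final.items()
                  if initial.get(sid) == decision)
    return 100.0 * matches / len(final)


# Hypothetical data: five studies, one initial decision later overturned.
final = {"s1": "include", "s2": "exclude", "s3": "exclude",
         "s4": "include", "s5": "exclude"}
initial = {"s1": "include", "s2": "exclude", "s3": "include",
           "s4": "include", "s5": "exclude"}
print(accuracy_pct(initial, final))  # 80.0
```

Unlike recall, this figure counts wrong inclusions and wrong exclusions equally.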

Why This Matters

Provides a clear performance snapshot of how closely reviewer or AI decisions align with final outcomes.

3. Conflict Rate

Measures the frequency of disagreement.

It shows the percentage of articles where screening decisions differed.

Applies to:

Reviewer vs Reviewer

Reviewer vs AI
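For either pairing, the idea can be sketched as follows. This is an illustration under stated assumptions, not the product's code: the function name `conflict_rate_pct` is hypothetical, and the example assumes that only articles screened by both parties are counted.

```python
def conflict_rate_pct(decisions_a, decisions_b):
    """Percentage of jointly screened articles where the decisions differ.

    Each argument maps article ID -> decision for one screener
    (a reviewer or the AI). Articles seen by only one side are ignored.
    """
    shared = decisions_a.keys() & decisions_b.keys()
    if not shared:
        return None  # no article was screened by both parties
    conflicts = sum(1 for aid in shared if decisions_a[aid] != decisions_b[aid])
    return 100.0 * conflicts / len(shared)


# Hypothetical data: reviewer and AI disagree on one of four articles.
reviewer = {"a1": "include", "a2": "exclude", "a3": "include", "a4": "exclude"}
ai = {"a1": "include", "a2": "include", "a3": "include", "a4": "exclude"}
print(conflict_rate_pct(reviewer, ai))  # 25.0
```

The same function covers Reviewer vs Reviewer by passing the second reviewer's decisions in place of `ai`.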

Why This Matters

Helps identify areas requiring clearer inclusion criteria, improved calibration, or additional reviewer training.

Where to Find These Metrics

Navigate to:

Project → Conflict Analysis

The new metrics appear in the Conflict Analysis dashboard each time an analysis is run.

How This Helps

Monitor reviewer consistency

Evaluate AI screening performance

Improve calibration between team members

Strengthen transparency in reporting

These enhancements provide measurable insights to support higher-quality and more reliable screening decisions.